HandVoxNet++: 3D Hand Shape and Pose Estimation using Voxel-Based Neural Networks

Abstract

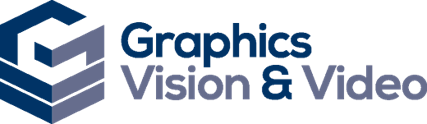

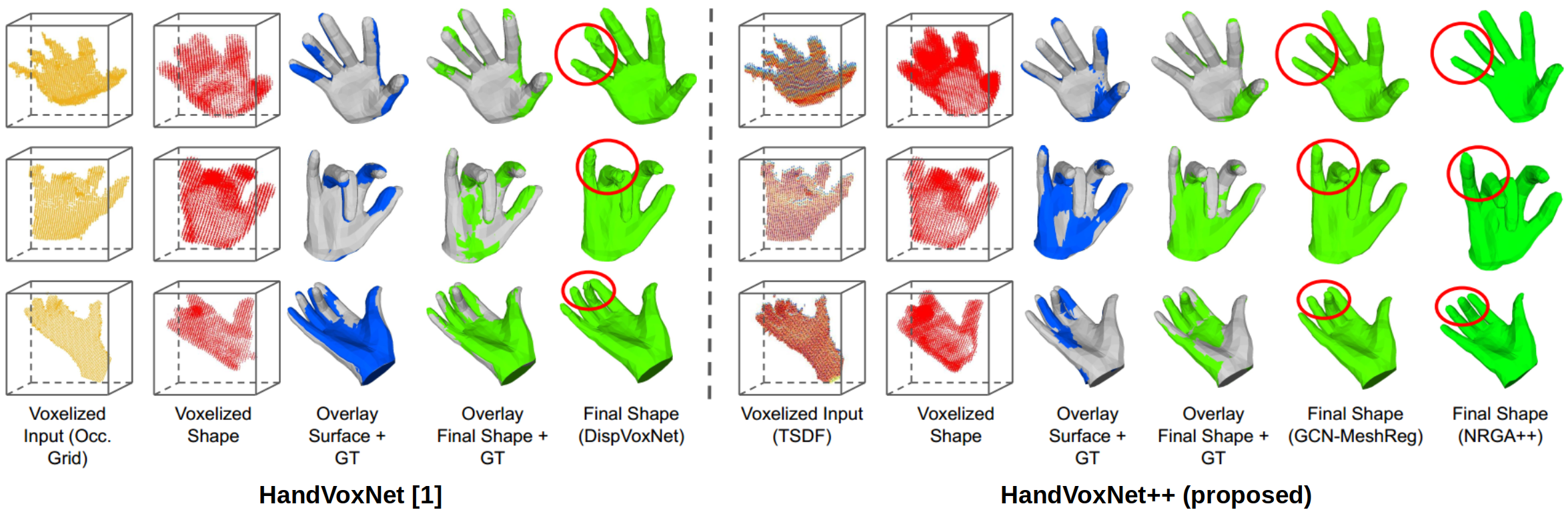

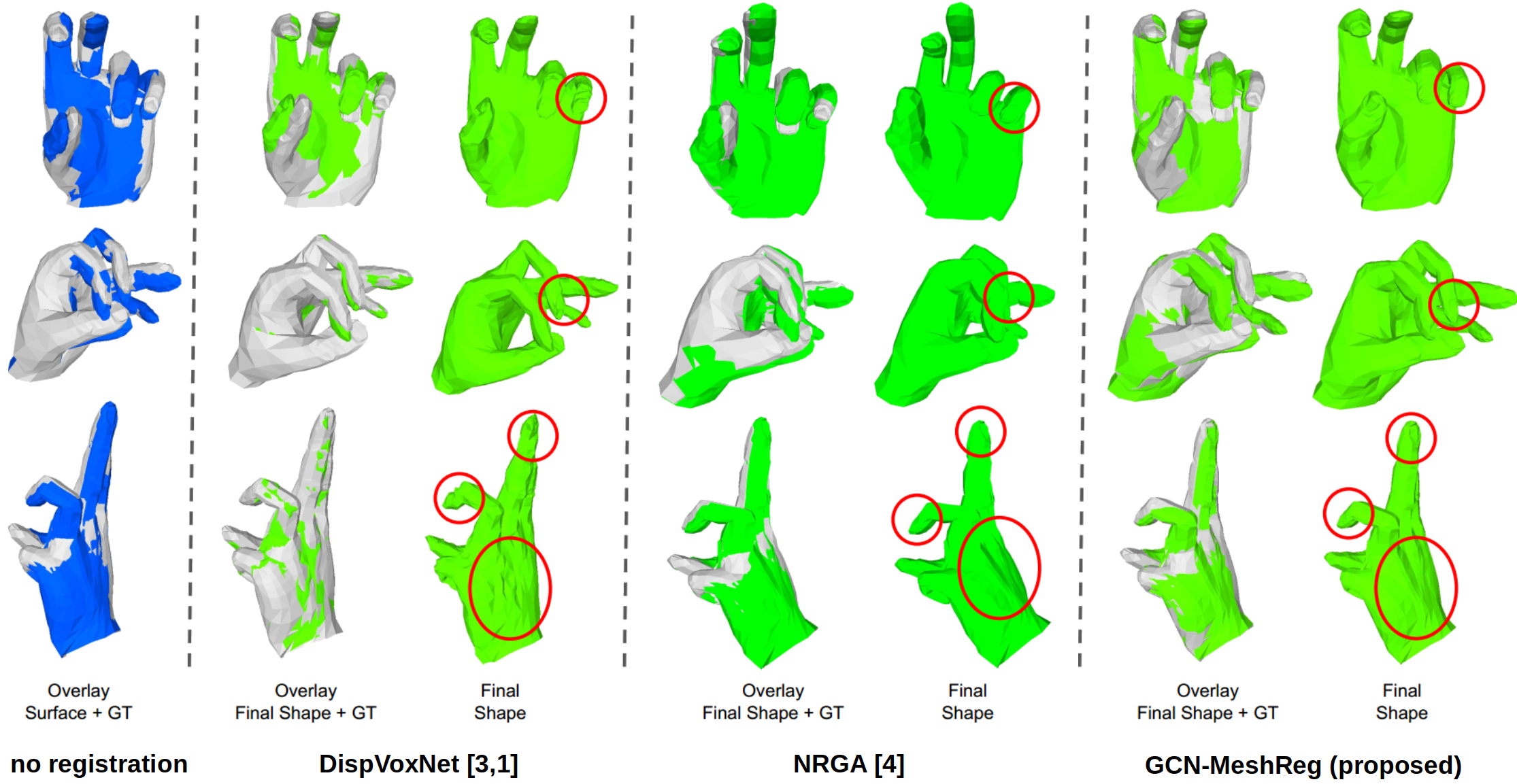

3D hand shape and pose estimation from a single depth map is a new and challenging computer vision problem with many applications. Existing methods directly regress hand meshes via 2D convolutional neural networks, which leads to artifacts due to perspective distortions in the images. To address these limitations, we develop HandVoxNet++, a voxel-based deep network with 3D and graph convolutions trained in a fully supervised manner. The input to our network is a 3D voxelized depth map based on the truncated signed distance function (TSDF). HandVoxNet++ relies on two hand shape representations. The first is the 3D voxelized grid of the hand shape, which does not preserve the mesh topology but is the most accurate representation. The second is the hand surface, which preserves the mesh topology. We combine the advantages of both representations by aligning the hand surface to the voxelized hand shape, either with a new neural Graph-Convolutions-based Mesh Registration (GCN-MeshReg) or with the classical segment-wise Non-Rigid Gravitational Approach (NRGA++), which does not rely on training data. In extensive evaluations on three public benchmarks, i.e., SynHand5M, the depth-based HANDS19 challenge, and HO-3D, the proposed HandVoxNet++ achieves state-of-the-art performance. In this journal extension of our previous approach presented at CVPR 2020, we gain 41.09% and 13.7% higher shape alignment accuracy on the SynHand5M and HANDS19 datasets, respectively. Our method ranked first on the HANDS19 challenge dataset (Task 1: Depth-Based 3D Hand Pose Estimation) at the time our results were submitted to the portal in August 2020.

Downloads

What is new in HandVoxNet++ Compared to HandVoxNet [1]?

- A new TSDF-based voxel-to-voxel network for 3D hand pose estimation (Stage 1). Our TSDF-based depth map representation achieves a 19.8% improvement in accuracy compared to binary voxelized grids [2].

- GCN-MeshReg (Stage 3), the first neural method with graph convolutions for aligning a hand mesh to a 3D voxelized hand shape. GCN-MeshReg significantly outperforms DispVoxNet [3] used in our previous method [1].

- An iterative refinement policy for shape registration (Stage 3) which significantly improves the accuracy and runtime compared to [1].

- A segment-wise NRGA++ approach for hands (Stage 3), which runs two orders of magnitude faster than NRGA [4].
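To make the first item concrete, the following is a minimal sketch of how a depth map could be turned into a TSDF voxel grid. It is not the HandVoxNet++ implementation: it assumes a projective TSDF (signed distance measured along the camera z-axis), a pinhole camera, depths in millimetres, and a sign convention where positive values lie in front of the observed surface. The function name `tsdf_from_depth` and all of its parameters are hypothetical and chosen only for illustration.

```python
def tsdf_from_depth(depth, fx, fy, cx, cy, origin, voxel_size, grid_size, trunc):
    """Projective TSDF sketch (assumed conventions, not the paper's code).

    depth      : 2D list of depths in mm, 0 meaning "no measurement"
    fx, fy     : focal lengths in pixels; cx, cy: principal point
    origin     : (x, y, z) of the grid corner in camera coordinates, mm
    voxel_size : edge length of one voxel in mm
    grid_size  : number of voxels per axis
    trunc      : truncation distance in mm
    Returns a grid_size^3 nested list with values in [-1, 1].
    """
    n = grid_size
    height, width = len(depth), len(depth[0])
    # Voxels without a valid measurement keep the "far in front" value 1.0.
    grid = [[[1.0] * n for _ in range(n)] for _ in range(n)]
    for i in range(n):
        for j in range(n):
            for k in range(n):
                # Voxel centre in camera coordinates.
                x = origin[0] + (i + 0.5) * voxel_size
                y = origin[1] + (j + 0.5) * voxel_size
                z = origin[2] + (k + 0.5) * voxel_size
                if z <= 0:
                    continue
                # Pinhole projection of the voxel centre onto the image.
                u = int(round(fx * x / z + cx))
                v = int(round(fy * y / z + cy))
                if not (0 <= u < width and 0 <= v < height):
                    continue
                d = depth[v][u]
                if d <= 0:
                    continue
                # Signed distance along z, scaled by trunc and clamped.
                sdf = d - z
                grid[i][j][k] = max(-1.0, min(1.0, sdf / trunc))
    return grid


if __name__ == "__main__":
    # Toy input: a flat surface 500 mm from the camera.
    flat = [[500.0] * 8 for _ in range(8)]
    tsdf = tsdf_from_depth(flat, 100.0, 100.0, 3.5, 3.5,
                           (-20.0, -20.0, 480.0), 10.0, 4, 20.0)
    print(tsdf[1][1])  # values along the z-axis for one (x, y) column
```

Unlike a binary occupancy grid, each voxel here stores how far it is from the observed surface and on which side it lies, which is one plausible reason a TSDF input is more informative to a 3D convolutional network.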

References

[1] J. Malik et al. HandVoxNet: Deep Voxel-Based Network for 3D Hand Shape and Pose Estimation from a Single Depth Map. In CVPR, 2020.

[2] G. Moon et al. V2V-PoseNet: Voxel-to-Voxel Prediction Network for Accurate 3D Hand and Human Pose Estimation from a Single Depth Map. In CVPR, 2018.

[3] S. Shimada et al. DispVoxNets: Non-Rigid Point Set Alignment with Supervised Learning Proxies. In 3DV, 2019.

[4] S. A. Ali et al. NRGA: Gravitational Approach for Non-Rigid Point Set Registration. In 3DV, 2018.

Citation

@inProceedings{HandVoxNet++2021,
  author = {Jameel Malik and Soshi Shimada and Ahmed Elhayek and Sk Aziz Ali and Christian Theobalt and Vladislav Golyanik and Didier Stricker},
  title = {HandVoxNet++: 3D Hand Shape and Pose Estimation using Voxel-Based Neural Networks},
  booktitle = {arXiv},
  year = {2021}
}

Acknowledgments

Contact

For questions or clarifications, please get in touch with:

Soshi Shimada sshimada@mpi-inf.mpg.de

Vladislav Golyanik golyanik@mpi-inf.mpg.de