State-of-the-Art Report

Advances in Neural Rendering

Abstract

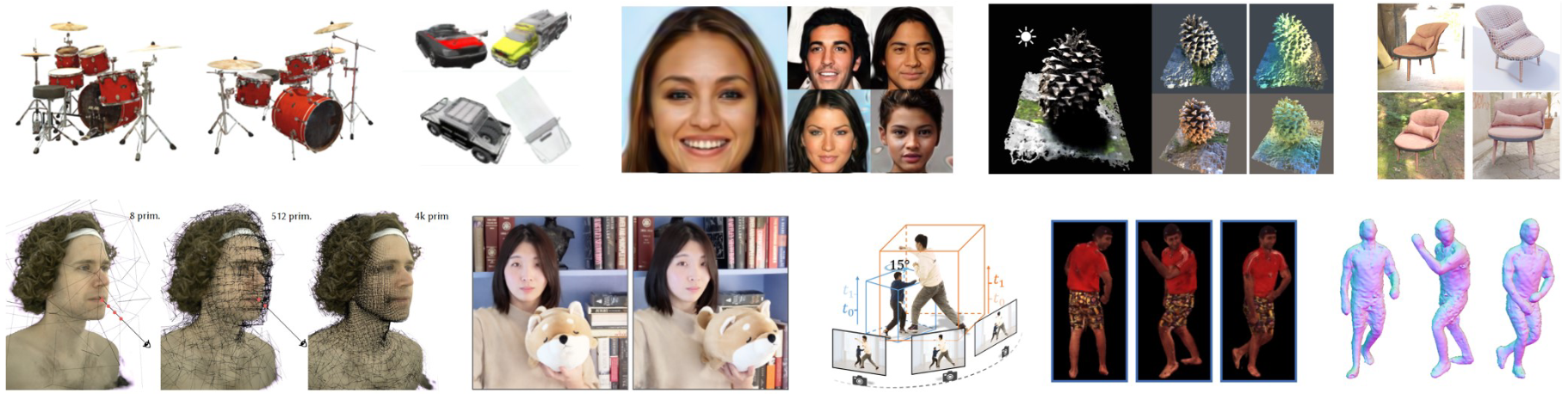

Synthesizing photo-realistic images and videos is at the heart of computer graphics and has been the focus of decades of research. Traditionally, synthetic images of a scene are generated using rendering algorithms such as rasterization or ray tracing, which take specifically defined representations of geometry and material properties as input. Collectively, these inputs define the actual scene and what is rendered, and are referred to as the scene representation (where a scene consists of one or more objects). Example scene representations are triangle meshes with accompanied textures (e.g., created by an artist), point clouds (e.g., from a depth sensor), volumetric grids (e.g., from a CT scan), or implicit surface functions (e.g., truncated signed distance fields). The reconstruction of such a scene representation from observations using differentiable rendering losses is known as inverse graphics or inverse rendering. Neural rendering is closely related, and combines ideas from classical computer graphics and machine learning to create algorithms for synthesizing images from real-world observations. Neural rendering is a leap forward towards the goal of synthesizing photo-realistic image and video content. In recent years, we have seen immense progress in this field through hundreds of publications that show different ways to inject learnable components into the rendering pipeline. This state-of-the-art report on advances in neural rendering focuses on methods that combine classical rendering principles with learned 3D scene representations, often now referred to as neural scene representations. A key advantage of these methods is that they are 3D-consistent by design, enabling applications such as novel viewpoint synthesis of a captured scene. In addition to methods that handle static scenes, we cover neural scene representations for modeling non-rigidly deforming objects and scene editing and composition. While most of these approaches are scene-specific, we also discuss techniques that generalize across object classes and can be used for generative tasks. In addition to reviewing these state-of-the-art methods, we provide an overview of fundamental concepts and definitions used in the current literature. We conclude with a discussion on open challenges and social implications.

Extended Talk

Downloads

Citation

@article{Tewari2022NeuRendSTAR,

author = {Tewari, A. and Thies, J. and Mildenhall, B. and Srinivasan, P. and Tretschk, E. and Yifan, W. and Lassner, C. and Sitzmann, V. and Martin-Brualla, R. and Lombardi, S. and Simon, T. and Theobalt, C. and Nie{\ss}ner, M. and Barron, J. T. and Wetzstein, G. and Zollh{\"o}fer, M. and Golyanik, V.},

title = {{Advances in Neural Rendering}},

journal = {Computer Graphics Forum (EG STAR 2022)},

year = {2022}

}

Acknowledgments

A. Tewari, V. Golyanik, and C. Theobalt are supported in part by the ERC Consolidator Grant 4DReply (770784). E. Tretschk is supported by a Reality Labs Research grant. M. Nießner is supported by the ERC Starting Grant Scan2CAD (804724).

Contact

For questions, clarifications, please get in touch with:Ayush Tewari

Edith Tretschk tretschk@mpi-inf.mpg.de

Vladislav Golyanik golyanik@mpi-inf.mpg.de