Our learnable coordinate-based QVF model can represent various visual fields: (a) Schematic diagram of the architecture; (b) 2D image representation (source: NASA/James Webb Space Telescope) of a moderate resolution (400×350 pixels); (c) Latent space interpolation of 3D signed distance fields (shape source: ShapeNet, Chang et al., 2015).

Quantum Implicit Neural Representations (QINRs) include components for learning and execution on gate-based quantum computers. While QINRs recently emerged as a promising new paradigm, many challenges concerning their architecture and ansatz design, the utility of quantum-mechanical properties, training efficiency and the interplay with classical modules remain. This paper advances the field by introducing a new type of QINR for 2D image and 3D geometric field learning, which we collectively refer to as Quantum Visual Field (QVF). QVF encodes classical data into quantum statevectors using neural amplitude encoding grounded in a learnable energy manifold, ensuring meaningful Hilbert space embeddings. Our ansatz follows a fully entangled design of learnable parametrised quantum circuits, with quantum (unitary) operations performed in the real Hilbert space, resulting in numerically stable training with fast convergence. QVF does not rely on classical post-processing—in contrast to the previous QINR learning approach—and directly employs projective measurement to extract learned signals encoded in the ansatz. Experiments on a quantum hardware simulator demonstrate that QVF outperforms the existing quantum approach and widely used classical foundational baselines in terms of visual representation accuracy across various metrics and model characteristics, such as learning of high-frequency details. We also show applications of QVF in 2D and 3D field completion and 3D shape interpolation, highlighting its practical potential.

QVF targets learning an implicit representation of multi-dimensional (2D or 3D) visual signals by optimising a data-conditioned quantum circuit to minimise the reconstruction loss: \[ \mathcal{L}(\theta) = \sum_{\mathbf{x} \in \mathcal{X}} \| f_{\theta}(\mathbf{x}) - \mathcal{S}(\mathbf{x}) \|^2, \] where \(\mathcal{X}\) denotes the sampled domain, and \(\mathcal{S}(\mathbf{x})\) is the target signal value to be represented by \(f_{\theta}(\mathbf{x})\). The function \(f_{\theta}(\mathbf{x})\) is realised through a coordinate-based gate-based quantum circuit: query coordinates are encoded into a quantum state in Hilbert space via learnable Boltzmann-regulated energy-based amplitude encoding, followed by further parametrised quantum evolution. The circuit output is extracted via projective measurements of the Pauli-Z observable.

We provide a visualisation of a learning dynamics of a lightweight QVF on a CIFAR-10 image instance reconstruction by monitoring the Peak Signal-to-Noise Ratio (PSNR) metrics with explicit parameterisation details given for each model. QVF achieves significantly faster convergence with better detail preservation compared to the classical counterpart (without enhancement of the quantum circuit). Notably, QVF attains a reconstruction quality of 30dB PSNR in ~170 iterations in contrast to 400 iterations required by a classical counterpart of analogous parameterisation, demonstrating a 2.35-fold improvement in training efficiency. To attain a reconstruction quality of 35dB PSNR, QVF takes ~300 iterations while the classical counterpart takes ~800 iterations, yielding a speed-up of almost 3x.

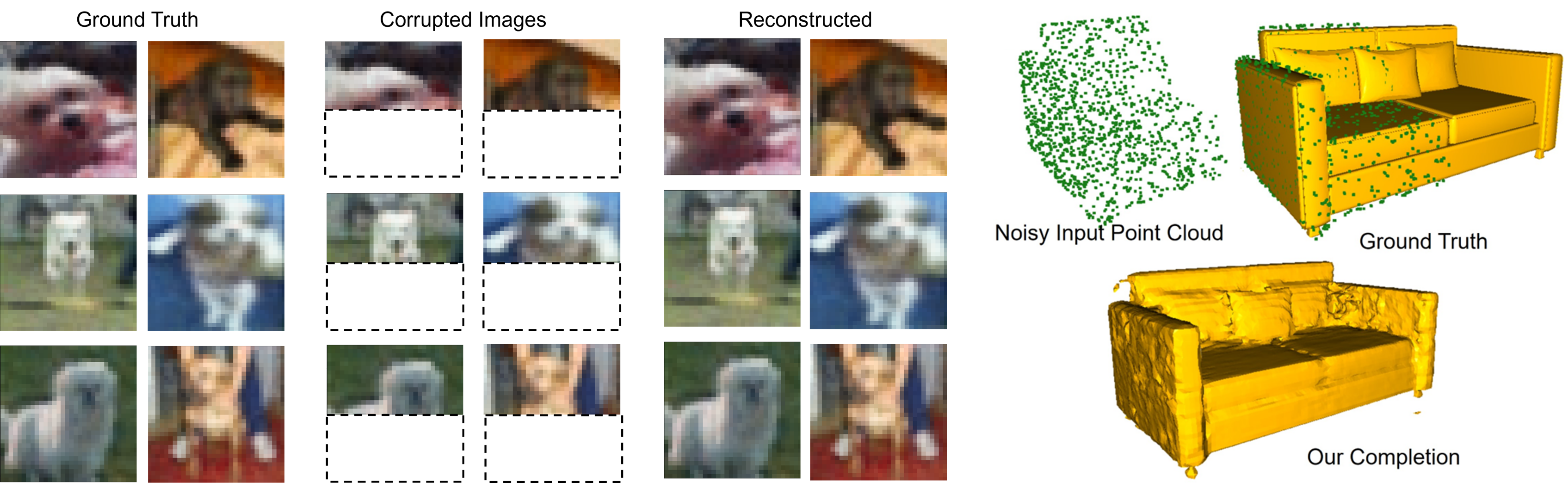

QVF can simultaneously represent multiple images. See also high-resolution reconstructed images in the teaser on top of this page in (c).

An interactive visualisation of the geometry.

QVF can simultaneously represent multiple 3D geometries (signed distance fields). We experiment with the ShapeNet dataset (Chang et al., 2015).

QVF enables smooth interpolation between learnt representations by leveraging a latent-conditioned quantum circuit, where each representation is associated with a latent code. During inference, linear interpolation in the latent space: \begin{equation} \mathbf{z}(t) = (1-t)\mathbf{z}_1 + t\mathbf{z}_2 \quad \text{for} \quad t \in [0,1] \end{equation} generates intermediate quantum states, whose measurements after evolution yield coherent interpolations of encoded representations. We show the interpolation of 3D geometries in the video, which can allow further downstream tasks:

QVF can complete images and geometries based on partial observations. (Left): Completion on images, i.e. image inpainting; (right) completion on geometries.

In practical quantum hardware implementations, the output of a quantum circuit is obtained through repeated projective measurements, i.e. shots, resulting in stochastic outcomes due to the inherent probabilistic nature of quantum mechanics. We analyse this process and demonstrate that increasing the number of measurement shots reduces the variance in the observed output, leading to more reliable statistical estimates.

We provide an ablation study of QVF's architectural efficiency from the following considerations: 1) circuit width, i.e. qubit count; 2) circuit depth and 3) classical energy inference module complexity; see details below. J and n describe the width and depth of the quantum circuit. p describes the latent space dimension of the classical backbone, which determines its expressivity, i.e. how well it can approximate the hidden spectrum. QVF's performance is determined by a balance between the quantum circuit that efficiently explores high-dimensional feature space and the classical component providing spectrally-aligned priors.

@inproceedings{Wang2025QVF,

title={Quantum Visual Fields with Neural Amplitude Encoding},

author={Wang, Shuteng and Theobalt, Christian and Golyanik, Vladislav},

booktitle={Neural Information Processing Systems (NeurIPS)},

year={2025}

}