EventNeRF: Neural Radiance Fields from a Single Colour Event Camera

Abstract

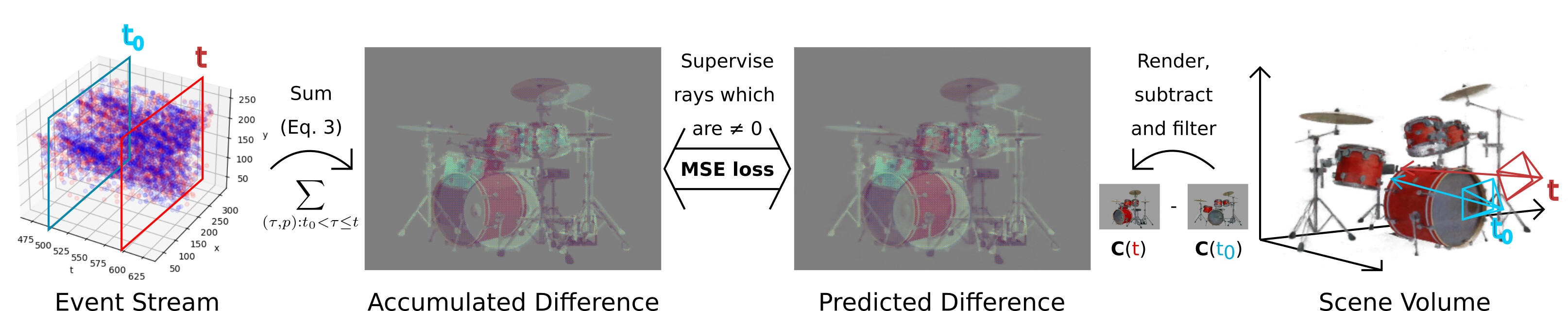

Asynchronously operating event cameras find many applications due to their high dynamic range, no motion blur, low latency and low data bandwidth. The field has seen remarkable progress during the last few years, and existing event-based 3D reconstruction approaches recover sparse point clouds of the scene. However, such sparsity is a limiting factor in many cases, especially in computer vision and graphics, that has not been addressed satisfactorily so far. Accordingly, this paper proposes the first approach for 3D-consistent, dense and photorealistic novel view synthesis using just a single colour event stream as input. At the core of our method is a neural radiance field trained entirely in a self-supervised manner from events while preserving the original resolution of the colour event channels. Next, our ray sampling strategy is tailored to events and allows for data-efficient training. At test, our method produces results in the RGB space at unprecedented quality. We evaluate our method qualitatively and quantitatively on several challenging synthetic and real scenes and show that it produces significantly denser and more visually appealing renderings than the existing methods. We also demonstrate robustness in challenging scenarios with fast motion and under low lighting conditions. We will release our dataset and our source code to facilitate the research field.

Baseline

Download Video: HD (MP4, 3 MB)

Figure 2: As the baseline we use the approach of first recovering traditional RGB frames from events using E2VID and then learning regular NeRF with them as the training data. Note that such approach does not account for the sparsity and asynchronousity of the event stream. Hence, they need much more memory and disk space to store all the reconstructed views. Moreover, there is a limit to how short the E2VID window can be made, and hence to the number of reconstructed views. In contrast, EventNeRF respects the asynchronous nature of event streams and reconstructs the neural representation directly from the event stream. Moreover, it can use an arbitrary number of windows, which allows it to reconstruct the scene even from 3% of the data, as we show in Sec. 4.5. That makes it significantly more scalable than first reconstructing the frames and using traditional NeRF.

Synthetic data results

Download Videos: first (MP4, 3 MB) second (MP4, 3 MB)

Figure 3: We compare against the approach of first recovering traditional RGB frames from events using E2VID [36] and then learning regular NeRF with them as the training data. Our method clearly outperforms the E2VID+NeRF approach in all metrics and on all sequences and resembles the ground truth better.

| E2VID+NeRF | Our EventNeRF | |||||

|---|---|---|---|---|---|---|

| Scene | PSNR ↑ | SSIM ↑ | LPIPS ↓ | PSNR ↑ | SSIM ↑ | LPIPS ↓ |

| Drums | 19.71 | 0.85 | 0.22 | 27.43 | 0.91 | 0.07 |

| Lego | 20.17 | 0.82 | 0.24 | 25.84 | 0.89 | 0.13 |

| Chair | 24.12 | 0.92 | 0.12 | 30.62 | 0.94 | 0.05 |

| Ficus | 24.97 | 0.92 | 0.10 | 31.94 | 0.94 | 0.05 |

| Mic | 23.08 | 0.94 | 0.09 | 31.78 | 0.96 | 0.03 |

| Hotdog | 24.38 | 0.93 | 0.12 | 30.26 | 0.94 | 0.04 |

| Materials | 22.01 | 0.92 | 0.13 | 24.10 | 0.94 | 0.07 |

| Average | 22.64 | 0.90 | 0.15 | 28.85 | 0.93 | 0.06 |

Table 1: Comparing our method against E2VID+NeRF. Our method consistently produces better results.

Real data capture setup

Figure 4: Real data recording setup. The object is placed on a 45 RPM direct-drive vinyl turntable and lit by a ring light mounted directly above it. The scene is recorded with a DAVIS 346C colour event camera (right bottom).

Download Video: HD (MP4, 1 MB)

Figure 5: Showing the fact that our real sequences were recorded under low lighting conditions. Here, we show the RGB stream of the event camera that was used for recording the real sequences. These sequences were recorded using just a 5W USB light source. We tone map the real sequences in the remaining figures that use this RGB stream for clarity. Note that EventNeRF only uses the event stream and does not use the RGB stream.

Real data results

Download Video: HD (MP4, 4 MB)

Figure 6: Real data results. In the “Plant” scene, we can reconstruct every stem and thin leaf. In the “Sewing” scene, we recover even a one-pixel-wide needle of the machine (best viewed with zoom). In the “Microphone” scene, we can reconstruct fine details such as the microphone grid. In the “Controller” scene, we preserve its details in the dark regions despite having low contrast. Similarly, in the “Goatling” scene, we can reconstruct both the details in the dark and bright highlights on the glasses. In the “Cube” scene, EventNeRF recovers sharp colour details. “Multimeter”, “Cube” and “Sewing” show how we recover view-dependent effects. In the “Bottle” scene, we can see the drawings on the reconstructed label. Note that all our results are halo-free, meaning they have no blurry silhouettes or trails behind the reconstructed object, which is a common artefact in event-based vision research.

Comparison to E2VID/ssl-E2VID+NeRF

Download Video: HD (MP4, 3 MB)

Figure 7: Results generated by different approaches. Our approach EventNeRF clearly outperforms all the methods. E2VID+NeRF produces significant artefacts in dark areas, uniformly-coloured surfaces, and also artefacts due to having a low number of reconstructed views used as NeRF training data. ssl-E2VID+NeRF does not support coloured data, handles short windows poorly, and has significant halos and trails behind the reconstructed object. In contrast, EventNeRF results are coloured, halo-free, detailed in both dark and bright regions, and the method works well with uniformly coloured surfaces.

Comparison to Deblur-NeRF

Download Video: HD (MP4, 3 MB)

Figure 8: We also compare against Deblur-NeRF, i.e., a RGB-based NeRF extraction method designed specifically to handle blurry RGB videos. We perform this comparison by applying Deblur-NeRF on the the RGB stream of the event camera and synthetic RGB frames with blur simulated using the same exposure timestamps as in the real captures. Results, however, show that our approach significantly outperform Deblur-NeRF. This is expected, Deblur-NeRF can only handle view-inconsistent blur. On the other hand, our approach produces almost blur-free results. In addition, it is significantly more memory and computationally efficient. For Deblur-NeRF, 100 training views were used, which took around 22 seconds to record due to the low-light condition (see Fig. 5). Our EventNeRF approach, however, need only one 1.33s revolution of the object. In addition, it converges well within 6 hours using two NVIDIA Quadro RTX 8000 GPUs. This compares favourably to Deblur-NeRF, which needs 16 hours with the same GPU resources. Furthermore, the training time for our method significantly drops with the torch-ngp implementation to just 1 minute using a single NVIDIA GeForce RTX 2070 GPU (see Fig. 12 below).

Ablation studies computed on the Drums

Download Video: HD (MP4, 3 MB)

Figure 9: Importance of our various design choices (see Sec. 4.4). The full model produces the best results.

| Method | PSNR ↑ | SSIM ↑ | LPIPS ↓ |

|---|---|---|---|

| Fixed 50 ms win. | 27.32 | 0.90 | 0.09 |

| W/o neg. smpl. | 26.48 | 0.87 | 0.16 |

| Full EventNeRF | 27.43 | 0.91 | 0.07 |

Table 2: Ablation studies computed on the "Drums".

Data efficiency

Download Video: HD (MP4, 6 MB)

Figure 10: PSNR performance of our EventNeRF on “Drums” with varying event numbers (multiplied by 106 on the x-axis). The images underneath the function graph show exemplary novel views obtained with different numbers of the events. To vary the number of events we only change the event generation threshold, the duration of training data remains constant.

Extracted mesh

Download Mesh: HD (GLB, 14 MB)

Figure 11: Using Marching Cubes, we can extract the learned geometry of "Chair" scene as a coloured mesh. Even though the original resolution of the training data is still only 346x260, the model and the extracted mesh are detailed.

Real-time Demo based on torch-ngp

Download Video: HD (MP4, 5 MB)

Figure 12: We show an interactive application of our approach that runs in real time. For this, we implement our method using torch-ngp instead of NeRF++ used for the main implementation. Training a model using this implementation takes around a minute using a single NVIDIA GeForce RTX 2070 GPU. At test, the method runs in real time. It produces highly photorealistic results that can be viewed from an arbitrary viewpoint. This, however, can come with some trade-off in the rendering quality.

| Our EventNeRF | Our Real-time Implementation | |||||

|---|---|---|---|---|---|---|

| Scene | PSNR ↑ | SSIM ↑ | LPIPS ↓ | PSNR ↑ | SSIM ↑ | LPIPS ↓ |

| Drums | 27.43 | 0.91 | 0.07 | 26.03 | 0.91 | 0.07 |

| Lego | 25.84 | 0.89 | 0.13 | 22.82 | 0.89 | 0.08 |

| Chair | 30.62 | 0.94 | 0.05 | 27.97 | 0.94 | 0.05 |

| Ficus | 31.94 | 0.94 | 0.05 | 26.77 | 0.92 | 0.12 |

| Mic | 31.78 | 0.96 | 0.03 | 28.34 | 0.95 | 0.04 |

| Hotdog | 30.26 | 0.94 | 0.04 | 23.99 | 0.93 | 0.10 |

| Materials | 24.10 | 0.94 | 0.07 | 26.05 | 0.93 | 0.07 |

| Average | 28.85 | 0.93 | 0.06 | 25.99 | 0.92 | 0.07 |

Table 3: Comparing EventNeRF using the NeRF++ implementation against a real-time implementation based on torch-ngp. While the real-time implementation takes significantly less training and testing time, it can compromise on some of the rendering quality.

Narrated Video with Experiments

Download Video: HD (MP4, 49 MB)

Downloads

Citation

@InProceedings{rudnev2023eventnerf,

title={EventNeRF: Neural Radiance Fields from a Single Colour Event Camera},

author={Viktor Rudnev and Mohamed Elgharib and Christian Theobalt and Vladislav Golyanik},

booktitle={Computer Vision and Pattern Recognition (CVPR)},

year={2023}

}

Contact

For questions, clarifications, please get in touch with:Viktor Rudnev

vrudnev@mpi-inf.mpg.de

Vladislav Golyanik

golyanik@mpi-inf.mpg.de

Mohamed Elgharib

elgharib@mpi-inf.mpg.de